So you’ve built your model, and now you want to deploy it for testing or to show it to someone like your mom or grandpa, who may not be comfortable using a collaborative notebook? No worries at all…

PDF data extraction can be a real headache, and it gets even trickier when you’re trying to snag underlined text — believe it or not, there aren’t any go-to solutions or libraries that handle this…

Large language models (LLMs) are gaining increasing popularity in both academia and industry, owing to their unprecedented performance in various applications. As LLMs continue to play a vital role…

What Slowly Changing Dimensions (SCDs) are and how to implement them in a data warehouse. The differences between SCD Types 0, 1, 2, 3 and 4, and how they affect pipelines.
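
To make the distinction concrete, here is a minimal sketch of the two most commonly contrasted types, assuming the usual definitions: Type 1 overwrites an attribute in place, while Type 2 closes the current row and appends a new version. The column names (`customer_id`, `valid_from`, `valid_to`, `is_current`) and the helper functions are hypothetical, chosen only for illustration, and plain Python lists stand in for a real dimension table.

```python
from datetime import date


def apply_scd1(rows, key, new_attrs):
    """Type 1: overwrite the attributes in place, so no history is kept."""
    for row in rows:
        if row["customer_id"] == key:
            row.update(new_attrs)
    return rows


def apply_scd2(rows, key, new_attrs, change_date):
    """Type 2: close the current row and append a new current version."""
    for row in rows:
        if row["customer_id"] == key and row["is_current"]:
            # Build the new version from the old row plus the changed attributes.
            new_row = {
                **row,
                **new_attrs,
                "valid_from": change_date,
                "valid_to": None,
                "is_current": True,
            }
            # Close the old version so it stays queryable as history.
            row["valid_to"] = change_date
            row["is_current"] = False
            rows.append(new_row)
            break
    return rows


def make_dim():
    # Hypothetical one-row customer dimension used for both examples.
    return [{
        "customer_id": 1,
        "city": "Oslo",
        "valid_from": date(2020, 1, 1),
        "valid_to": None,
        "is_current": True,
    }]


dim_type1 = apply_scd1(make_dim(), 1, {"city": "Bergen"})
dim_type2 = apply_scd2(make_dim(), 1, {"city": "Bergen"}, date(2024, 5, 1))
```

After the Type 1 update the table still has one row, now showing the new city. After the Type 2 update it has two rows: the closed historical row and the new current one, which is why Type 2 pipelines need to filter on the current flag or a validity range.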

When I discovered Shiny years ago, I got immediately hooked. Shiny is an R package to build interactive web applications that can run R code in the backend. I was fascinated by the ability it…

Welcome back to a new chapter of “Courage to Learn ML.” For those new to it, this series aims to make these complex topics accessible and engaging, much like a casual conversation between a…

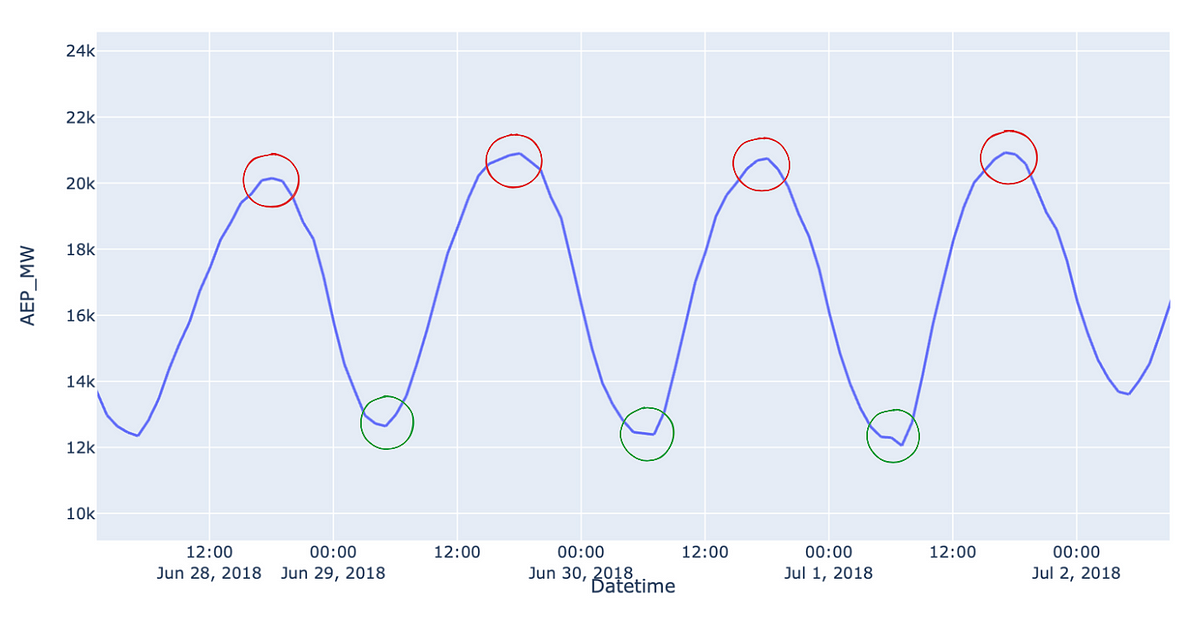

Cyclical Encoding: An Alternative to One-Hot Encoding for Time Series Features. Cyclical encoding provides your model with the same information using significantly fewer features.
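
As a minimal sketch of the idea (not necessarily the article's own implementation), a periodic feature such as hour of day can be mapped to a sine/cosine pair, so two columns stand in for the 24 that one-hot encoding would need. The function names and the hour-of-day example are assumptions made for illustration.

```python
import math


def cyclical_encode(value, period):
    """Map a periodic value (e.g. hour 0-23 with period 24) to a (sin, cos) pair."""
    angle = 2 * math.pi * value / period
    return math.sin(angle), math.cos(angle)


def euclidean(a, b):
    """Straight-line distance between two encoded points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


# Encode a few hours of the day onto the unit circle.
encoded = {hour: cyclical_encode(hour, 24) for hour in (0, 6, 12, 23)}

# Hours 23 and 0 are neighbours on the circle, so their encodings are
# closer together than those of hours 0 and 12, which sit on opposite sides.
wraparound_gap = euclidean(encoded[23], encoded[0])
opposite_gap = euclidean(encoded[0], encoded[12])
```

Both the sine and the cosine are needed: either one alone maps two different hours to the same value, while the pair identifies each position on the cycle uniquely.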

A few weeks ago, I had an MRI scan. That’s when it occurred to me to wonder how complicated it would be to evaluate MRI images with the help of AI. I had always thought that this was a complex…

The embedding model is a critical component of retrieval-augmented generation (RAG) for large language models (LLMs). It encodes both the knowledge base and the query written by the user.

Hierarchies are a familiar concept in data, but some sources deliver theirs in an unusual format. What happens when we get pre-aggregated values?