Data is the foundation of how today’s websites and apps function. Items in your shopping cart, comments on your posts, and changing scores in a video game are examples of information stored…

One of the compelling aspects of using a large language model is how easily it lets you build your very own chatbot tailored to…

Ever since the introduction of BERT in 2018, fine-tuning has been the standard approach to adapt large language models (LLMs) to downstream tasks. This changed with the introduction of LoRA (Hu et al…

A practical review of applying Differential Privacy to Federated Learning in the NVIDIA Flare framework...

We are glad to say this was a week for open-source AI and small LLMs, with the release of Llama 3 by Meta and Microsoft’s announcement of Phi-3. Llama 3 is a big win for open-source and cheap and…

Every week, several top-tier academic conferences and journals showcase innovative research in computer vision, presenting exciting breakthroughs in various subfields such as image recognition…

Challenges and solutions of developing interpretable and explainable neural networks for ethical AI, addressing GDPR compliance, transparency, and accountability in machine learning.

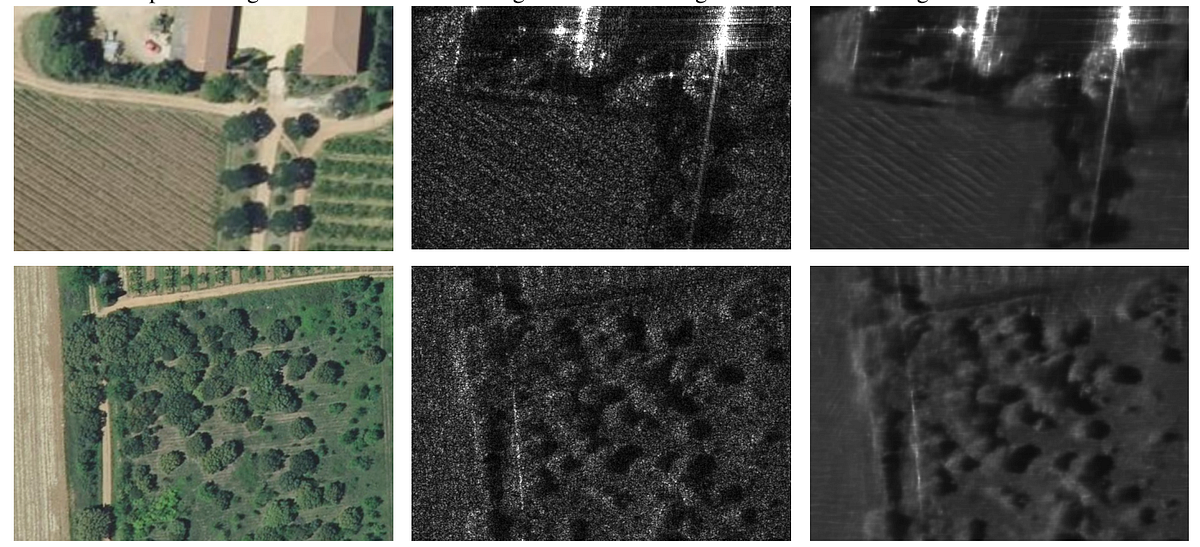

Synthetic aperture radar (SAR) images are widely used in a large variety of sectors (aerospace, military, meteorology, etc.). The problem is that this kind of image suffers from noise in its raw format…

If you’re seeking a beginner-friendly guide to Neural Style Transfer, you’ve come to the right place! In this article, we’ll break down the concept of Neural Style Transfer using simple illustrations…

Many LLMs, particularly those that are open-source, have typically been limited to processing text or, occasionally, text with images (Large Multimodal Models or LMMs). But what if you want to…